You've used ChatGPT, Claude, Gemini, or one of the dozens of AI tools floating around. You typed something in, got an answer back, and thought: 'Wow, this is smart.'

I need to break something to you.

It's not smart. It's a guessing engine. A sophisticated, well-fed, impressively fast guessing machine, but a guessing machine all the same. The difference between people who use AI well and those who use AI badly comes down to whether they understand that.

I use AI heavily. I've used the best models on the market and the worst. I've run AI locally on my own hardware and in the cloud. I've fine-tuned models and run pre-training jobs. I've seen what happens inside these systems, and I want to show you what's happening when you type a prompt and get a response.

What happens when you ask AI a question

When you type 'Write me a marketing plan for my new SaaS' into an AI tool, the system doesn't sit down, think about SaaS, consider your target market, and craft a strategy. That's what a human does. An AI appears to do that, but it does not.

What the AI does is this: it looks at your words, then predicts what word likely comes next. Then the next. Then the next. Over and over, thousands of times, until it's produced something that looks like a marketing plan.

That's the entire trick. Well, not a trick, but you get the point.

Here's an analogy. Imagine you've read every book, article, and blog post ever written about marketing. You didn't understand any of it. You don't know what marketing is. But you memorised every pattern of words: which words tend to follow which other words, which sentences tend to appear in which contexts, which paragraphs tend to come after which headings.

Now someone asks you to write a marketing plan. You can't think about marketing, because you don't understand it. But you can produce something that looks exactly like a marketing plan, because you've seen the pattern a million times.

That's AI in a nutshell. It's pattern matching at an extraordinary scale.
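To make the analogy concrete, here is a minimal sketch of a "reader" that memorises only which word follows which. The tiny corpus and the function names are made up for illustration, and a real language model is a neural network, not a lookup table of word pairs. The principle of repeatedly choosing a likely next word is the same, though.

```python
import random
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count, for each word, which words follow it and how often."""
    follows = defaultdict(Counter)
    words = text.lower().split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def generate(follows, start, length=8, seed=0):
    """Pick each next word in proportion to how often it followed the last one."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length):
        options = follows.get(out[-1])
        if not options:  # no known continuation, so stop early
            break
        words, counts = zip(*options.items())
        out.append(rng.choices(words, weights=counts, k=1)[0])
    return " ".join(out)

# Made-up training text, purely illustrative
corpus = (
    "a marketing plan defines the target market . "
    "a marketing plan sets the budget . "
    "the target market shapes the marketing plan ."
)
model = train_bigrams(corpus)
print(generate(model, "a"))
```

The output reads like a fragment about marketing plans, yet nothing in the code knows what a plan or a market is. It only knows what tended to come next.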

The maths behind the magic

The technical details

Large language models are neural networks trained on massive text corpora. During training, the model learns probability distributions over sequences of tokens. When you give it a prompt, it performs a forward pass through the network and produces a probability distribution over the vocabulary for the next token. It samples from that distribution (with temperature, top-k, or top-p adjustments), appends the result, and repeats.
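The loop described above can be sketched in a few lines of plain Python. Treat this as an illustration under stated assumptions, not how any particular model is implemented: `toy_forward` and its table of logits are hypothetical stand-ins for a real network's forward pass, and the five-word vocabulary is invented.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; lower temperature sharpens them."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def filter_top_k_top_p(probs, top_k=None, top_p=None):
    """Keep the top_k likeliest tokens and/or the smallest set whose
    cumulative probability reaches top_p, then renormalise."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    if top_k is not None:
        order = order[:top_k]
    if top_p is not None:
        kept, mass = [], 0.0
        for i in order:
            kept.append(i)
            mass += probs[i]
            if mass >= top_p:
                break
        order = kept
    total = sum(probs[i] for i in order)
    return {i: probs[i] / total for i in order}

def sample_next(logits, rng, temperature=1.0, top_k=None, top_p=None):
    """Sample one token id from the adjusted distribution."""
    candidates = filter_top_k_top_p(softmax(logits, temperature), top_k, top_p)
    ids = list(candidates)
    return rng.choices(ids, weights=[candidates[i] for i in ids], k=1)[0]

# Hypothetical vocabulary and made-up logits, indexed by the previous token id
VOCAB = ["the", "plan", "market", "budget", "."]
TOY_LOGITS = [
    [-1.0, 2.0, 1.5, 1.0, -2.0],  # after "the"
    [0.5, -1.0, 0.0, 0.0, 2.0],   # after "plan"
    [0.5, 1.0, -1.0, 0.0, 1.5],   # after "market"
    [0.0, 0.5, 0.0, -1.0, 2.0],   # after "budget"
    [2.5, 0.0, 0.0, 0.0, -2.0],   # after "."
]

def toy_forward(token_ids):
    """Stand-in for a forward pass: scores for the next token."""
    return TOY_LOGITS[token_ids[-1]]

def generate(prompt_ids, steps, seed=0, **sampling):
    """Predict, sample, append, repeat."""
    rng = random.Random(seed)
    ids = list(prompt_ids)
    for _ in range(steps):
        ids.append(sample_next(toy_forward(ids), rng, **sampling))
    return ids

ids = generate([0], steps=6, temperature=0.8, top_k=3, top_p=0.9)
print(" ".join(VOCAB[i] for i in ids))
```

Setting `top_k=1` makes the loop always take the single likeliest token, while a higher temperature spreads the choice across less likely ones.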

The key phrase is probability distribution. The model assigns a likelihood to every possible next word, then picks one. Sometimes it picks well. Sometimes it doesn't. But it never 'knows' what it's saying. There is no comprehension layer. No reasoning engine. No understanding. Some researchers have found that models build internal representations that look like more than surface-level pattern matching, and the debate is ongoing. But what you interact with when you type a prompt is, at its core, a prediction engine.

It's statistics and probability from start to finish.

An 80-billion-parameter model has 80 billion numerical weights, each one adjusted during training to make the model's predictions slightly more accurate. Those weights encode patterns about language. They don't encode understanding of the world. The model can tell you 'the capital of Ghana is Accra' not because it knows what a capital city is, but because the pattern 'capital of Ghana' was overwhelmingly followed by 'Accra' in its training data.
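Here is a rough sketch of what 'adjusted during training' means, shrunk from billions of weights down to three. Everything in it is an assumption made for illustration: the three candidate words, the ten made-up observations, and the learning rate. A real model shares its weights across all contexts instead of keeping one score per answer, but each update follows the same idea of nudging weights so the observed next token becomes a little more probable.

```python
import math

def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical tiny vocabulary for the slot after "the capital of Ghana is"
CANDIDATES = ["Accra", "Lagos", "Nairobi"]

# Made-up training data: what followed the phrase in ten documents
observations = ["Accra"] * 9 + ["Lagos"]

def train_step(weights, target_index, lr=0.1):
    """One gradient step on cross-entropy loss.
    The gradient with respect to each logit is (probability - 1) for the
    observed word and (probability - 0) for every other word."""
    probs = softmax(weights)
    return [
        w - lr * (p - (1.0 if i == target_index else 0.0))
        for i, (w, p) in enumerate(zip(weights, probs))
    ]

weights = [0.0, 0.0, 0.0]  # start with no preference at all
for _ in range(20):        # twenty passes over the data
    for word in observations:
        weights = train_step(weights, CANDIDATES.index(word))

probs = softmax(weights)
for word, p in zip(CANDIDATES, probs):
    print(f"{word}: {p:.2f}")
```

After training, 'Accra' carries most of the probability simply because it appeared most often after the phrase. No step in the loop involves knowing what a capital city is.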

Today's models go through additional training stages, such as reinforcement learning from human feedback (RLHF), and are wrapped in techniques like chain-of-thought prompting, tool use, and retrieval systems built on top. These make the outputs better. They don't change the fundamental mechanism underneath. At the base of every response is token prediction.

Why your results look so good

This is where people get fooled.

AI has ingested more text than any human could read in a thousand lifetimes. Wikipedia, textbooks, research papers, code repositories, news articles, forum discussions, all compressed into those billions of parameters. When it produces output, the output is informed by an absurd volume of information.

The breadth of data is what makes the results impressive. Not intelligence.

Think of it this way: if you gave a human access to every document ever written and asked them to synthesise an answer, they'd produce something excellent too. AI does a version of that, except it doesn't synthesise the way you do. It predicts which words should come next based on patterns it's seen before.

This is why AI tends to take the path of least resistance. It defaults to the most common, most predictable response. The one with the highest probability. The safe answer. The obvious answer. The 'you are right' answer. The answer that looks right because it matches the patterns most frequently seen in training data.

And for most people, that's enough. The output looks polished. It sounds confident. It covers the main points. So they accept it, submit it, and move on.

That's the trap.

The output is never the full picture

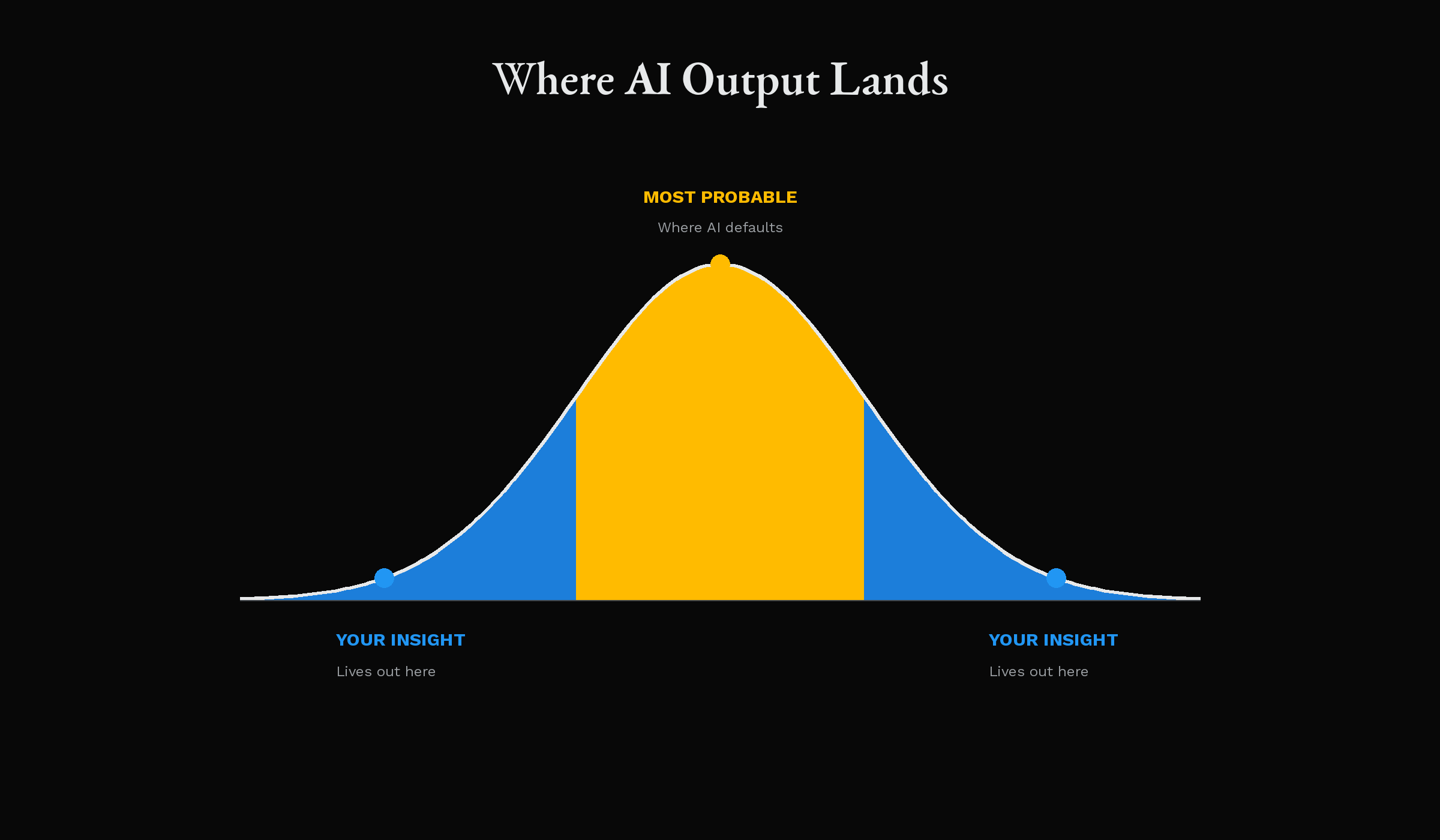

I've learned this from years of working with these systems: whatever output AI gives you is almost never the complete answer. It's the most probable answer, which is a fundamentally different concept.

The most probable answer is the one that fits the patterns best. It's the average of everything the model has seen. It's the consensus view, the safe take, the thing that would get a B+ on an exam. It's rarely the insight that changes how someone sees a problem.

Ask AI to write a business proposal and it gives you the proposal structure it's seen most often. Ask it to debug your code and it suggests the fix most commonly associated with the error pattern. Ask it to draft an email and it produces the phrasing most people use in that context.

You get the median outcome. Not the best one.

The gaps, the things AI misses, are where your value as a human lies. The context it doesn't have about your specific situation. The creative leap it can't make because creativity goes beyond pattern recombination. The judgement call about what matters most to the person who'll read your work. The lived experience that tells you this approach won't work in your market, your team, your culture.

AI can't do any of that. You can.

The ownership illusion

I want you to think about something.

When you use AI to produce work and then present that work, you say 'I did this.' You say 'here's my proposal' or 'I wrote this report.' There's a reason you claim ownership, and it's worth interrogating.

If you didn't review it, didn't think through it, didn't challenge it, didn't improve it, you didn't do the work. You operated a tool and delivered its raw output. That's not the same as doing the work.

And people can tell. Hiring managers can tell. Clients can tell. Colleagues can tell. AI-generated output has a flatness to it, a sameness, because it converges towards the statistical middle. Every AI-written email sounds like every other AI-written email. Every AI-drafted strategy reads like a textbook summary. The fingerprints of probability are all over it.

How to use AI without losing your edge

Here's my advice, and it's simple.

Every time AI gives you output, treat it as a first draft written by someone who has read everything but understood nothing. Because at a mechanical level, that's exactly what it is.

Use AI as a thinking partner, not a ghostwriter

Pull information out of it, challenge its output, and build your own solution on top. The people who'll thrive in a world full of AI are the ones who recognise when the model is wrong, when it's lazy, and when it's given them the most obvious answer instead of the right one.

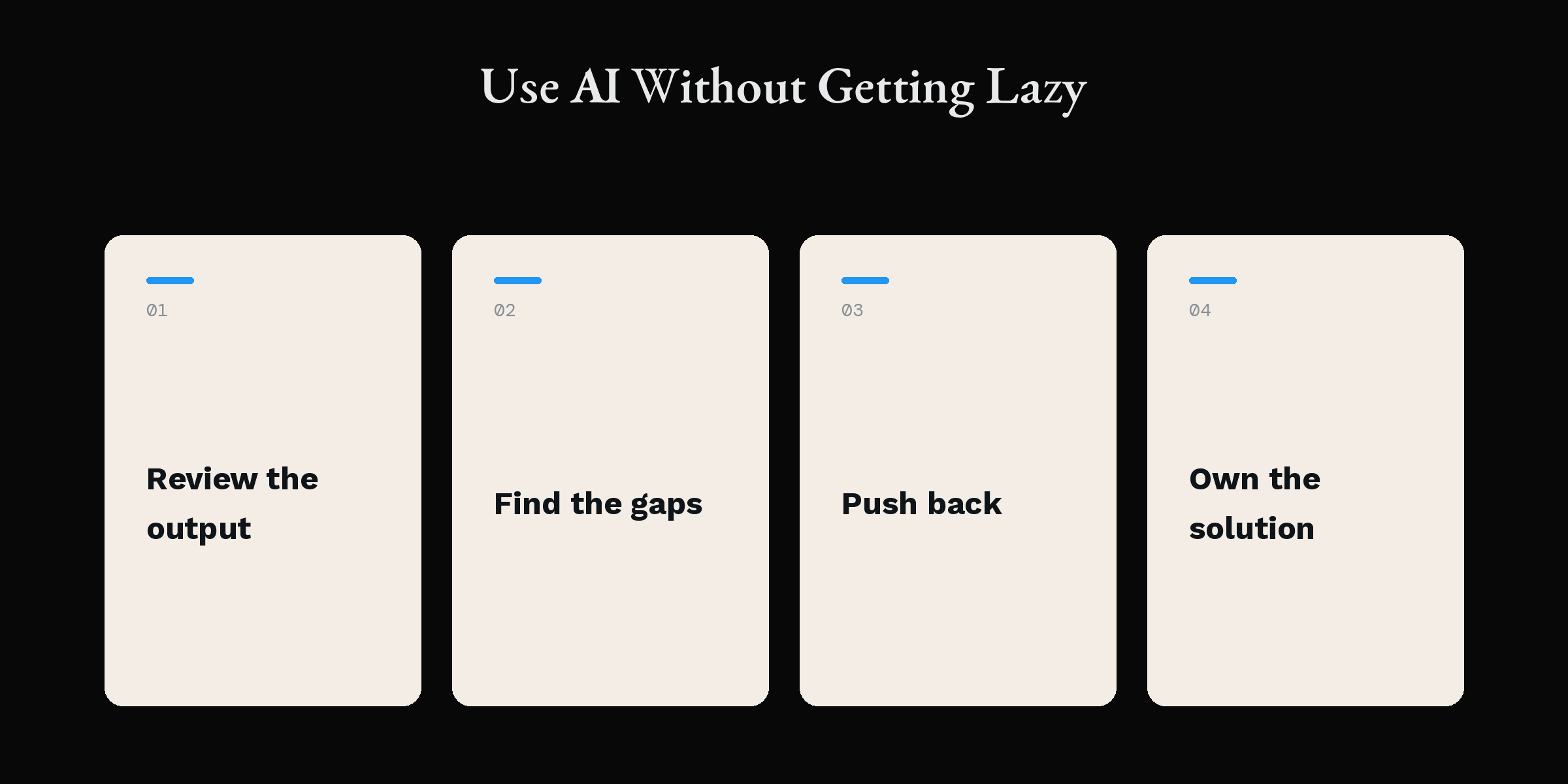

1. Review the response with fresh eyes

Read the output slowly. Ask yourself: what did the AI miss? What context does it lack? What would someone with deep experience in this area add? Where is the output generic, and where does it need to be specific?

2. Think with your own brain

Get up. Walk around. Think about the problem with your own mind, the one that has judgement, experience, taste, and the ability to reason about things it's never seen before. AI can't do that. You can.

3. Push back on the AI

Give feedback. Challenge the output. Ask it to consider angles it missed. Tell it where it's being generic. Force it into specifics. The harder you push, the more useful the output becomes.

4. Keep your edge sharp

The goal isn't to work faster by accepting whatever the model produces. The goal is to use AI as a research partner that accelerates your thinking while you retain the judgement and creativity that make your work yours.

AI is a tool. Your brain is the product.

AI can surface information faster than any human. It can scan enormous datasets, identify patterns, and produce structured output in seconds. Use it. It's powerful.

But speed isn't quality. Volume isn't insight. Probability isn't truth.

Your ability to think through problems, to feel what's missing, to know your audience, to apply judgement from years of lived experience: these will always produce better solutions than raw AI output.

The question isn't whether to use AI. Use it. Lean on it. Let it accelerate your work. I'm a proponent of AI.

The question is whether you'll let AI do your thinking for you, or whether you'll use AI to think better.

One of those paths makes you dependent on a prediction engine. The other makes you someone who produces work that matters.

Choose carefully.