March 6, 1957. Ghana became the first sub-Saharan African country to gain independence from colonial rule. Today, 69 years later, if you try to build a search engine that indexes content in Twi, Ewe, or Dagbani, you'll find that the tools to do it properly don't exist.

I know this because I tried.

How I got here

Two years ago, I started building a local search engine. Nothing fancy at first. Over time, it grew into something I relied on across multiple production systems: full-text search, vector hybrid retrieval, auto-partitioned indexes, and cross-language binary serialisation. It did its job well.

Recently, I started turning it into something bigger: a distributed search engine with parallel query execution and production-grade features. The project is already open-source, and I'm actively building it in the open. This is where the problems started. When you're building for a wider audience, you need language support to be right. Not close. Right.

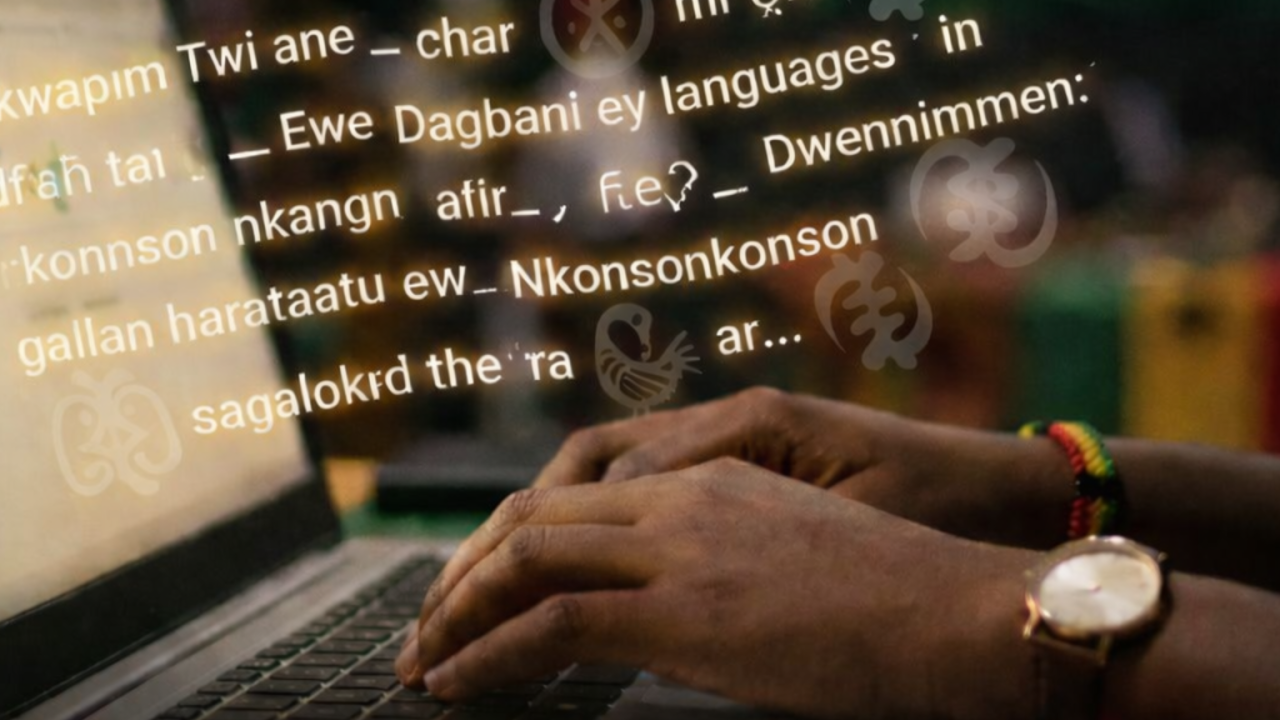

English worked. French worked. German, Spanish, Portuguese, all clean. Then I tried Twi, and everything fell apart.

So what broke?

Search engines need stemming algorithms. Stemming takes words like 'running' and 'runs' and maps them back to 'run'. Irregular forms like 'ran' need a dictionary lookup on top of that, but the goal is the same. Without it, the engine sees each of those as a completely different word. Your search results become fragile and useless because the engine can't tell that these words are related.
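To make that concrete, here is a minimal sketch of what a stemmer buys you at index time. `toy_stem` and `build_index` are names I made up for this post, and the three suffix rules are a deliberately tiny illustration, not Porter's algorithm or the engine's real code.

```python
from collections import defaultdict

# A handful of English suffixes, checked in order. Illustrative only.
SUFFIXES = ("ing", "ed", "s")


def toy_stem(word: str) -> str:
    """Strip one known suffix, then undo a doubled final consonant."""
    word = word.lower()
    for suffix in SUFFIXES:
        stem = word[: -len(suffix)]
        if word.endswith(suffix) and len(stem) >= 3:
            # "running" -> "runn" -> "run"
            if stem[-1] == stem[-2] and stem[-1] not in "aeioulsz":
                stem = stem[:-1]
            return stem
    return word


def build_index(docs: dict[int, str]) -> dict[str, set[int]]:
    """Inverted index keyed by stem instead of by surface word."""
    index: dict[str, set[int]] = defaultdict(set)
    for doc_id, text in docs.items():
        for token in text.lower().split():
            index[toy_stem(token)].add(doc_id)
    return index


docs = {1: "she was running late", 2: "he runs daily", 3: "they ran home"}
index = build_index(docs)
matches = sorted(index[toy_stem("run")])  # documents 1 and 2
```

A query for 'run' now finds the documents containing 'running' and 'runs'. Document 3 is missed because suffix rules alone can't reach an irregular form like 'ran'. Every rule in that tuple encodes knowledge about English morphology, and that knowledge is exactly what hasn't been written down for Twi.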

Martin Porter published the first widely used stemming algorithm in 1980. His Snowball framework now covers a long list of languages, built up over forty-six years of work.

Languages supported by Snowball stemmers

English, French, German, Spanish, Italian, Dutch, Russian, Portuguese, Arabic, Swedish, Danish, Norwegian, Finnish, Hungarian, Romanian, and more. European, Scandinavian, Slavic, and Semitic languages are well represented. For Twi? Nothing. For Ewe? Nothing. For Dagbani, Yoruba, Igbo? Nothing. No stemmer in Snowball, not in NLTK, not in spaCy. Nowhere.

The same story repeats with stopword lists. These are the common words ('the', 'is', 'and') that search engines filter out so they can focus on the words that carry meaning. NLTK ships stopword lists for 21 languages: Arabic, Azerbaijani, Danish, Dutch, English, Finnish, French, German, Greek, Hungarian, Indonesian, Italian, Kazakh, Nepali, Norwegian, Portuguese, Romanian, Russian, Spanish, Swedish, and Turkish. Not a single African language.
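Stopword filtering itself is trivial once the list exists. A sketch, assuming a tiny hand-picked English set (real lists are far longer) and a hypothetical helper called `content_terms`:

```python
# Tiny illustrative English stopword set, not a real toolkit list.
ENGLISH_STOPWORDS = {"the", "is", "and", "a", "of", "in"}


def content_terms(text: str, stopwords: set[str]) -> list[str]:
    """Keep only the tokens that are not in the stopword set."""
    return [token for token in text.lower().split() if token not in stopwords]


sentence = "The history of Ghana is long and rich"

with_list = content_terms(sentence, ENGLISH_STOPWORDS)
# ['history', 'ghana', 'long', 'rich']

# A language with no list behaves like an empty set: nothing is filtered.
without_list = content_terms(sentence, set())
```

The code is four lines. The list is the hard part, and for a language that has none, every filler word ends up indexed and scored as if it carried meaning.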

The African Stopwords Project launched in 2023 to start fixing this. They've curated about 60 stopwords for Yoruba so far. Twi, Ewe, and Dagbani aren't even on their list yet. And none of this work has made it into the toolkits that developers reach for when they're building software.

The work is happening, but it's not enough

I don't want to sound like nobody is doing anything, because that's not true. The Masakhane community has built named entity recognition datasets covering 20 African languages, Twi and Ewe included. NLP Ghana and Algorine built the Khaya app for translation and speech recognition in Twi, Ewe, Ga, Yoruba, and Dagbani. A 2024 study published by Springer introduced a Twi-English parallel corpus for machine translation. Researchers at the University of Ghana have produced speech datasets for Akan, Ewe, Dagbani, Dagaare, and Ikposo.

That work matters.

But all of it focuses on translation, speech recognition, and entity recognition. The layer beneath, the foundational infrastructure that makes search engines work (stemmers, tokenisers, stopword lists), is still empty.

You can't borrow English stopwords for African languages

A 2013 study by Asubiaro built a stopword list for Yoruba using entropy-based methods and found that only 69.1% of Yoruba stopwords overlap with English ones. The languages are too different. Each one needs its own stopword list, built from scratch by people who speak it.

That's my problem right now. I can get partway there. If you speak a language, you can sit down and curate a stopword list from your own knowledge of it. I can ship partial support that way. But stemmers are a different story. A stopword list is a list. A stemmer is an algorithm, and you can't write one without documented morphological rules that go deep enough to turn into code. That's the part that's missing, and it's the part that makes the biggest difference in search quality.
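For the stopword half, a corpus can at least propose candidates for a speaker to review. The sketch below uses one generic entropy heuristic: a word spread evenly across many documents scores high, a word confined to one document scores zero. I'm not claiming this is the exact method the Yoruba study used. The function name and the three English placeholder sentences are mine, standing in for a real corpus.

```python
import math
from collections import Counter, defaultdict


def document_entropy(docs: list[str]) -> dict[str, float]:
    """Shannon entropy (in bits) of each word's spread across documents."""
    per_word: dict[str, Counter] = defaultdict(Counter)
    for doc_id, text in enumerate(docs):
        for token in text.lower().split():
            per_word[token][doc_id] += 1

    scores = {}
    for word, counts in per_word.items():
        total = sum(counts.values())
        # p is the share of this word's occurrences found in one document.
        scores[word] = sum(
            (c / total) * math.log2(total / c) for c in counts.values()
        )
    return scores


# Placeholder corpus in English; a real run needs text in the target language.
corpus = [
    "the farm is big",
    "the market is open",
    "the school and the road",
]

scores = document_entropy(corpus)
# Highest entropy first, ties broken alphabetically.
ranked = sorted(scores, key=lambda w: (-scores[w], w))
candidates = ranked[:2]  # ['the', 'is']
```

The output is a list of suggestions and nothing more. Someone who speaks the language still has to decide which candidates are truly empty words and which only look that way in a small corpus.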

These languages don't work like European ones

These aren't cosmetic differences. The structure of these languages makes European stemming approaches useless.

Akan has serial verb constructions, where multiple verbs combine into a single expression. Balmer and Grant coined the term 'serial verb' in 1929 when they wrote the first grammar of Fante Akan. Linguists have studied this phenomenon for almost a century, but nobody has written an algorithm that a search engine can use to break these constructions apart.

Dagbani classifies nouns using suffixes. Not prefixes like Bantu languages. Suffixes that mark whether a word is singular or plural, what semantic category it belongs to, whether it's functioning as a noun or adjective. A 2023 paper in Topics in Linguistics showed how these suffixes do far more than pluralisation. They shape the meaning of words in ways that a simple 'chop off the ending' stemmer would destroy.

Yoruba is tonal. Written without its diacritics, the word 'oko' could mean 'farm', 'husband', 'hoe', 'vehicle', or 'spear'. Five different words that look identical on screen. Most software strips the diacritical marks that distinguish them. So a Yoruba speaker types the word for 'husband' and the search engine has no idea whether they meant that or one of the four other words.
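Here is roughly what that stripping looks like in code. This is a sketch of a very common "accent folding" step, not any particular engine's implementation. The five spellings are my assumption, taken from the way this example is usually presented, and should be checked by a Yoruba speaker.

```python
import unicodedata

# Assumed spellings (verify with a native speaker). Escapes keep the
# combining marks unambiguous: \u1ecd is o with dot below, \u0301 is the
# acute (high tone) mark, \u0300 is the grave (low tone) mark.
WORDS = {
    "oko": "farm",
    "\u1ecdk\u1ecd": "husband",
    "\u1ecdk\u1ecd\u0301": "hoe",
    "\u1ecdk\u1ecd\u0300": "vehicle",
    "\u1ecd\u0300k\u1ecd\u0300": "spear",
}


def strip_marks(text: str) -> str:
    """Decompose characters, then drop every combining mark."""
    decomposed = unicodedata.normalize("NFD", text)
    return "".join(ch for ch in decomposed if not unicodedata.combining(ch))


# Accent folding: all five words collapse into the single string "oko".
collapsed = {strip_marks(word) for word in WORDS}

# NFC normalisation: equivalent encodings are unified, distinctions survive.
distinct = {unicodedata.normalize("NFC", word) for word in WORDS}
```

After folding, `collapsed` holds one entry and `distinct` still holds five. Keeping the marks is the easy part. Deciding how to match a query typed without them, on a keyboard that makes them hard to enter, is the part that needs people who know the language.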

Ewe has similar tonal complexity, and the rules for how its prefixes and suffixes interact with tone aren't documented deeply enough for anyone to write an algorithm from them.

What these languages need before software can support them

Proper morphological documentation. How do suffixes chain together? How does tone change meaning? How do verbs serialise? That documentation, at the depth a programmer needs to turn it into code, doesn't exist yet. Without it, no one can build the stemmers, tokenisers, or morphological analysers that search engines and NLP pipelines depend on.

Who decides when our languages go digital

This is the part that sticks with me.

In November 2024, Google announced Twi support for Voice Search, Gboard, and Google Translate. They built it through their AI Research Centre in Accra as part of a rollout covering 15 African languages. Good. I'm glad they did it.

But think about what that means. A company in Mountain View, California, gets to decide when Twi becomes a language that technology recognises. They set the timeline. They set the priorities. They choose which languages come first and which ones wait.

Akan has over nine million speakers. Ewe has 3.8 million. Dagbani has 1.2 million. These aren't dying languages. Millions of people speak them today, right now, in homes and markets and schools and churches. But their digital existence depends on when a Silicon Valley company gets around to it.

Kwame Nkrumah didn't wait for the British to hand over independence. He organised people, built coalitions, and demanded it. Our approach to technology should carry that same spirit.

I'm not saying Western contributions don't matter. The Masakhane community is proof of what Pan-African collaboration produces when people show up. But something gets lost when the tools for your own language are built by people who don't speak it. A Dagbani speaker feels how noun suffixes shift across forms. A Yoruba speaker hears the tonal difference between 'Ọkọ́' the farming tool and 'Ọkọ' the family member without thinking about it. That kind of knowing lives in the body. You can't replace it with a research grant.

What this costs us

Search engines, autocomplete, recommendation systems, NLP pipelines: all of them assume your language has a stemmer, a tokeniser, a stopword list. If it doesn't, your language is invisible to software.

A Ghanaian student searching for learning materials in Twi will get worse results than a French student searching in French. Not because the Twi content doesn't exist. Because nobody built the tools to find it.

Every March 6, we celebrate independence. Flags, parades, speeches about the sacrifices that brought us self-governance. But digital self-governance, our languages existing fully in technology because we built the infrastructure ourselves, remains unfinished.

I need help

I'm not writing this to complain. I'm writing this because I need help.

If you work in computational linguistics, NLP, or language technology, and you speak an African language, you have something this work desperately needs. The translation and speech recognition efforts are a strong foundation. Now we need to go a layer deeper. Morphological rules for stemming. Tokenisers that handle tonal systems and suffix-based noun classes. Stopword lists built by native speakers and validated by linguists, then shipped inside the toolkits developers grab off the shelf every day.

It starts with the basics. How does your language form plurals? How do verb tenses work? What suffixes exist, and how do they combine? Those are the building blocks that everything else depends on.

I'm building this search engine in the open. It's already open-source, and there is plenty of work still to be done on it. I want African language support baked in from day one, not patched in later as a nice-to-have. But the linguistic work has to come first, and no one person can do it alone.

So here's my ask: let's talk. If you're a linguist, a developer, a researcher, or anyone who cares about this, reach out. Let's build on what Masakhane, NLP Ghana, and the African Stopwords Project have already started. Pick one language. Map its morphology. Build one stemmer. Then the next.

Ghana gained political independence 69 years ago today. Our languages still haven't gained digital independence. Let's change that. And let's do it ourselves.